I Translated 3.9 Million Words in 4 Days with Parallel AI Agents

493 blog posts across 17 years, translated into 10 languages, ~4,900 files, ~3.9 million words. Claude Code's parallel agents made it possible — but the Korean disaster, the Cantonese voice problem, and the 5-hour usage cap taught me more than the successes.

A month ago, I added 5 languages to DIALØGUE in 48 hours and wrote about how planning documents are the secret to fast AI execution.

That was 5 languages for a single app with maybe 400 UI strings.

This week, I tried something much bigger: localizing my entire blog — 493 posts spanning 17 years — into 10 languages. Vietnamese, Indonesian, Spanish, French, Portuguese, German, Japanese, Korean, Simplified Chinese, and Cantonese.

~4,900 translated files. ~3.9 million words. 4 days.

This is not a story about AI being magical. It's a story about what actually happens when you throw thousands of translation tasks at parallel AI agents — the orchestration that works, the failures that don't show up until you read the output, and the uncomfortable truth about quality control when machines write at scale.

The Scale Problem

My blog goes back to 2007. Some posts are 300-word updates about search marketing in Singapore. Others are 5,000-word deep dives on US-China relations or expat relocation guides. The archive includes everything from Yahoo SEM analytics to book reviews to AI product launches.

Translating 493 posts manually into 9 languages would take a professional translation team weeks. Maybe months. And it would cost somewhere between "uncomfortable" and "refinance the house."

But I'd already built the i18n infrastructure — next-intl routing, locale-aware components, ISR for non-English pages — as part of a broader internationalization push. The technical stack was ready. I just needed the content.

Day 1: Vietnamese and the First Lessons

I started with Vietnamese because it's personal — it's my first language. I could read every translated post and immediately tell if something sounded wrong.

Claude Code translated all 493 posts in one session. The process looked clean:

- Read the English MDX file

- Translate the content, preserving all frontmatter structure

- Keep URLs, code blocks, and technical terms intact

- Write the translated file to content/blog/vi/YYYY/MM/DD/slug.mdx

- Sign off with the Vietnamese equivalent of "Cheers, Chandler"

The first QA pass caught the pattern that would haunt every language: URLs and sign-offs. The agent would "translate" internal blog URLs (turning /blog/2024/... into Vietnamese slugs that don't exist), replace "Chandler" with a Vietnamese name variant, and occasionally lose the frontmatter slug field entirely.
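A rewritten-URL check can be sketched by comparing the internal links in the source against those in the translation. This is a minimal illustration, not the script actually used: the function names are hypothetical, and it assumes internal links always start with /blog/.

```python
import re

# Assumption: internal links look like /blog/2024/05/01/some-slug.
INTERNAL_URL = re.compile(r'/blog/[^\s)\]>"]+')

def internal_urls(text: str) -> set[str]:
    """Collect every internal /blog/... path, ignoring trailing sentence punctuation."""
    return {url.rstrip(".,;:") for url in INTERNAL_URL.findall(text)}

def rewritten_urls(source: str, translated: str) -> set[str]:
    """Return internal URLs in the translation that never appear in the source."""
    return internal_urls(translated) - internal_urls(source)
```

Any non-empty result means the agent invented a path. A translated slug will not match anything in the English file, so it shows up here.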

I built a QA checklist:

- File count matches source (493)

- No empty or stub files

- All frontmatter has slug, title, date, categories

- No rewritten internal URLs

- Sign-off matches the target locale's convention

- For CJK languages: native characters actually present

That checklist became the backbone of every subsequent locale.
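The frontmatter item on that checklist could be automated along these lines. It is a rough sketch that avoids a YAML parser and only looks for top-level keys between the opening and closing --- lines; the function name is hypothetical and the parsing is deliberately naive.

```python
import re

REQUIRED_KEYS = ("slug", "title", "date", "categories")  # fields named in the checklist

def missing_frontmatter_keys(mdx: str) -> list[str]:
    """Return the required keys absent from the leading --- frontmatter block."""
    lines = mdx.splitlines()
    if not lines or lines[0].strip() != "---":
        return list(REQUIRED_KEYS)  # no frontmatter at all
    try:
        end = next(i for i, line in enumerate(lines[1:], start=1)
                   if line.strip() == "---")
    except StopIteration:
        return list(REQUIRED_KEYS)  # frontmatter block never closed
    # Only unindented "key:" lines count as top-level keys.
    present = {m.group(1) for line in lines[1:end]
               if (m := re.match(r"([A-Za-z_]+)\s*:", line))}
    return [key for key in REQUIRED_KEYS if key not in present]
```

An empty list means the file passes. A post that lost its slug field returns ["slug"].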

Day 2: The Parallel Agent Breakthrough

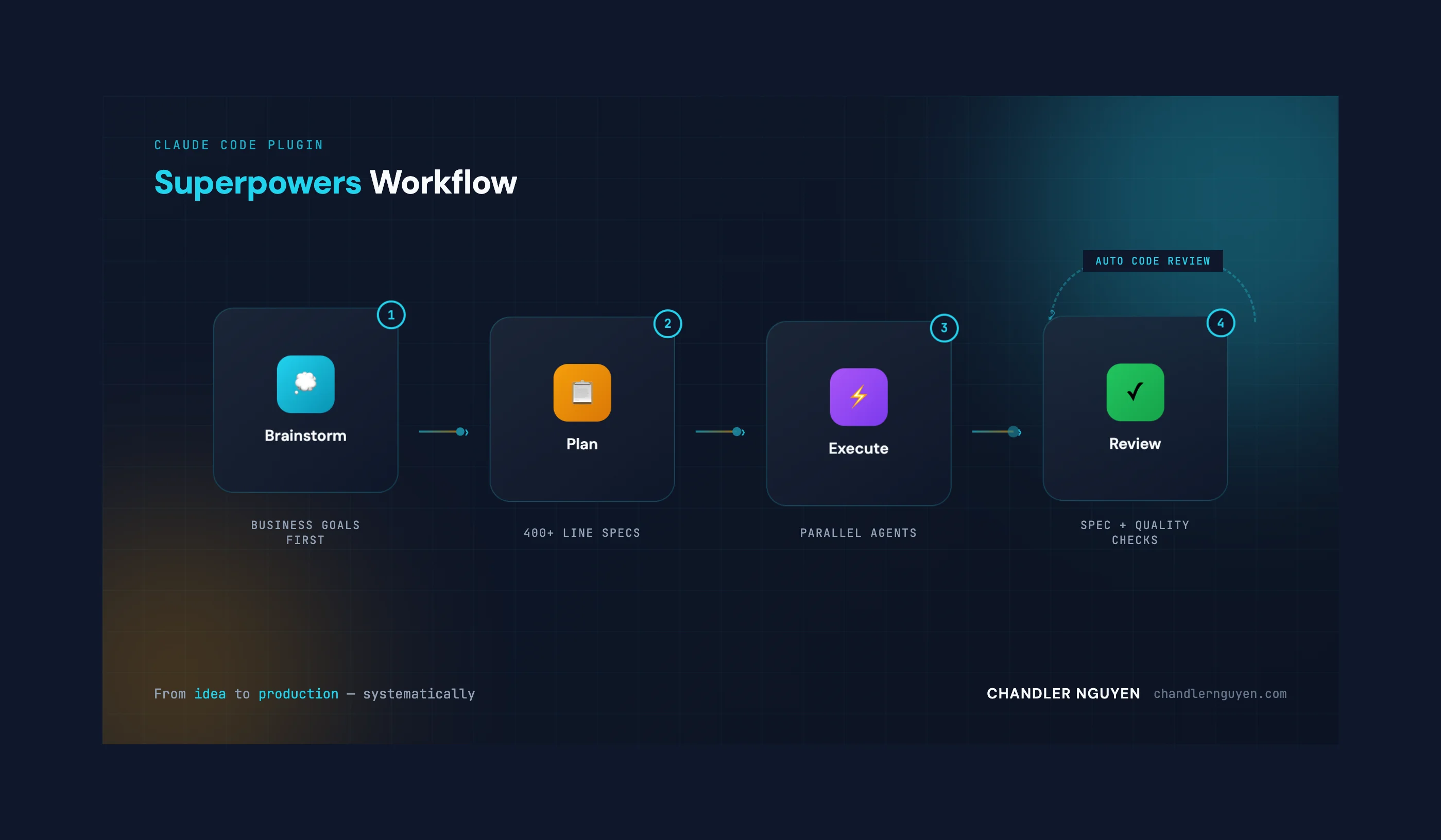

With Vietnamese done and the QA checklist proven, I needed to go faster. Claude Code's killer feature for this kind of work is parallel subagent dispatch — you can spin up multiple independent agents that each work on separate files simultaneously.

Here's what the orchestration looked like for Spanish:

Dispatching 30 translation agents...

├── Agent 1: 2007-2008 posts (42 posts)

├── Agent 2: 2009 posts (38 posts)

├── Agent 3: 2010-2011 posts (35 posts)

├── ...

├── Agent 28: 2025 Nov-Dec posts (12 posts)

├── Agent 29: 2026 Jan posts (8 posts)

└── Agent 30: 2026 Feb posts (9 posts)

Each agent got a batch of posts, the style guide, the QA checklist, and the sign-off convention. They ran in parallel, writing files independently. No coordination needed because each agent touches different files.

Spanish: 493 posts translated in one session. Then French. Then Portuguese. Then German. Then Japanese.

Five languages in roughly 12 hours. That's the part that feels like the future — watching your terminal fill with 30 simultaneous progress indicators, each agent churning through a decade of blog posts while you make dinner.


The Orchestration Pattern

The pattern that emerged:

- Pre-create all directories — agents fail silently if the target directory doesn't exist

- Batch by year range — 10-15 posts per agent for normal posts, 2-3 per agent for 3,000+ word posts

- Include the style guide in every dispatch — agents have no shared memory, so each one needs the full context

- Run QA after each locale completes — file count, empty files, broken frontmatter, URL rewrites, sign-off consistency

- Fix stragglers with targeted cleanup agents — there are always 5-15 posts that get missed or malformed
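The batching rule in that list can be sketched as a small planning function. The Post type, the default sizes, and the example path layout are assumptions for illustration; only the ranges (10-15 normal posts, 2-3 long posts, 3,000+ words as the cutoff) come from the pattern above.

```python
from dataclasses import dataclass

@dataclass
class Post:
    path: str        # hypothetical layout, e.g. "content/blog/en/2007/03/14/some-slug.mdx"
    word_count: int

def make_batches(posts, normal_size=12, long_size=3, long_threshold=3000):
    """Split posts into per-agent batches, giving long posts much smaller batches."""
    ordered = sorted(posts, key=lambda p: p.path)  # date-based paths sort chronologically
    normal = [p for p in ordered if p.word_count < long_threshold]
    long_posts = [p for p in ordered if p.word_count >= long_threshold]

    def chunk(items, size):
        return [items[i:i + size] for i in range(0, len(items), size)]

    return chunk(normal, normal_size) + chunk(long_posts, long_size)
```

Each returned batch would become one agent's work list, dispatched together with the style guide and the QA checklist.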

The Korean Disaster

Korean was supposed to be routine. Same pattern as the other 7 languages. Dispatch agents, wait, QA, fix stragglers.

Instead, it was the worst translation quality of any locale.

72% of the Korean posts were not translations — they were summaries. The agents had truncated 350+ posts into 2-3 paragraph abstracts, losing all the detail, all the personality, all the nuance. A 4,000-word analysis of Ray Dalio's "Principles" became a 200-word overview. A detailed SEM tutorial became "This post discusses search engine marketing strategies."

The UI strings file (messages/ko.json) was even worse. Mixed Korean and English throughout, with corrupted strings like "Ch및ler" instead of "Chandler." 및 is Korean for "and," and "Chandler" contains the letters "and," so it looks as if the model translated that fragment in place, mid-name.

I had to redo the entire locale from scratch. Every single post. The second attempt, with stricter instructions about preserving full content and matching the source post's length, came out clean.

Lesson: parallel execution amplifies mistakes. If your prompt has a subtle flaw, 30 agents will all make the same mistake 30 times faster. QA isn't optional — it's the only thing standing between "done" and "disaster."
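A summary-instead-of-translation failure is cheap to detect structurally by comparing lengths. The sketch below is one possible check, not the one from the original pipeline. The 0.3 threshold is an assumption and would need tuning per language, since Korean and CJK text is usually shorter in characters than the English source.

```python
def looks_truncated(source: str, translated: str, min_ratio: float = 0.3) -> bool:
    """Flag a translation whose character count is suspiciously small versus the source."""
    source_len = len(source.strip())
    if source_len == 0:
        return False  # nothing to compare against
    return len(translated.strip()) / source_len < min_ratio
```

Running this on every source/translation pair and counting the flagged files would surface a locale-wide problem before any human reads a single post.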

The Chinese Problem: 43 Commits to Get Right

Simplified Chinese (zh) was the most labor-intensive locale. Not because of quality issues (the translations were good), but because the session kept hitting limits: the 493 posts ended up spread across 43 separate commits.

Claude Code runs on Anthropic's API, and there's a usage cap. Even on Max tier with Sonnet 4.6, extended sessions that dispatch dozens of parallel agents will eventually hit a 5-hour cooling period. For Chinese, that meant translating in waves: ~50 posts, hit the cap, wait, continue, hit the cap again.
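Working in waves is easier when the remaining work can be recomputed from disk after every interruption. A minimal sketch, assuming the translated files mirror the source tree's relative paths (the directory arguments and function name are hypothetical):

```python
from pathlib import Path

def pending_posts(source_root: Path, target_root: Path) -> list[Path]:
    """Relative paths of source posts that have no non-empty translation yet."""
    pending = []
    for src in sorted(source_root.rglob("*.mdx")):
        rel = src.relative_to(source_root)
        dst = target_root / rel
        # Treat missing and zero-byte files the same: both still need translating.
        if not dst.exists() or dst.stat().st_size == 0:
            pending.append(rel)
    return pending
```

After each cooling period, the next wave is simply whatever this function still returns.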

The Chinese translations also needed the most QA because CJK content has unique failure modes:

- Characters from the wrong script (Japanese kanji mixed into Simplified Chinese)

- Overly formal register that reads like a government document instead of a blog

- Western punctuation instead of Chinese punctuation (ASCII , and . where full-width , and 。 belong)

- Translated names that shouldn't be translated ("Claude" is "Claude" in Chinese, not 克劳德)

The Cantonese Voice Problem

When I added Traditional Chinese with Cantonese voice (zh-HK), I faced a different challenge entirely. The translations needed to use Cantonese-specific particles such as 嘅 (possessive), 咗 (completed-action marker), 喺 (at/in), 啲 (some), and 冇 (don't have), and to maintain the casual, conversational tone that defines Cantonese writing.

Standard Mandarin translations sound bureaucratic in Hong Kong. "本网站提供中文版本" is correct Mandarin, but a Cantonese reader expects "呢個網站有中文版本." Same meaning, completely different voice.

The challenge isn't translation accuracy — it's voice authenticity. The model can produce grammatically correct Cantonese, but it defaults to a Mandarin register unless you explicitly instruct it to use colloquial particles and code-mixing patterns.

My QA check for Cantonese included grepping every file for the presence of Cantonese-specific particles. 493 out of 493 passed. But I still had to manually rewrite the entire messages/zh-HK.json UI strings file because the first version was ~70% English — the agent had skipped most of the translations.
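The particle check amounts to a few lines. This is a sketch of the idea rather than the exact grep used. The min_distinct parameter is an added assumption for tightening the check, and particle presence is only a weak signal of voice, not proof of it.

```python
CANTONESE_PARTICLES = ("嘅", "咗", "喺", "啲", "冇")  # the particles named above

def particle_counts(text: str) -> dict[str, int]:
    """Count occurrences of each Cantonese-specific particle."""
    return {particle: text.count(particle) for particle in CANTONESE_PARTICLES}

def has_cantonese_voice(text: str, min_distinct: int = 1) -> bool:
    """True if at least min_distinct different particles appear in the text."""
    distinct = sum(1 for count in particle_counts(text).values() if count > 0)
    return distinct >= min_distinct
```

Raising min_distinct makes the check stricter for long posts, where a single stray particle says little about the overall register.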

What I Tried with OpenAI Codex

Somewhere around the German translations, I hit Claude Code's usage cap and decided to try OpenAI's Codex while waiting. I had a free month of ChatGPT Plus, which includes Codex access.

The good: Codex produces solid planning documents. Its initial analysis of the codebase and proposed translation approach was well-structured and reasonable. Response times were fast. And it follows instructions closely — almost too closely, which can be a feature.

The bad: The version I used (gpt-5.2-codex) couldn't run parallel subagents. It processed posts sequentially — one at a time. For 493 posts, that's not viable. It also worked in short bursts, completing 5-10 posts before stopping to ask for feedback. Every time, I had to say "continue" to get the next batch.

When gpt-5.3-codex became available mid-session, I switched. Better, but still no parallel execution. The fundamental architectural difference — Claude Code can dispatch 30+ independent agents that run simultaneously, while Codex operates as a single sequential process — makes Claude Code dramatically faster for bulk content tasks.

The honest comparison: Codex is good for focused, single-file work. It's responsive and follows directions well. But for the kind of mass parallel execution I needed — 4,437 files across 9 locales — Claude Code's agent architecture is in a different category entirely.

The Checklist That Saved Everything

Every locale went through the same QA pipeline:

✓ File count: 493/493

✓ Empty files: 0

✓ Small files (<100 bytes): 0

✓ Missing slugs: 0

✓ Rewritten URLs: 0

✓ Broken frontmatter: 0

✓ Wrong sign-off: 0

✓ Native characters present: 493/493

For CJK languages, I added:

✓ Cantonese particles (嘅/咗/喺/啲/冇): 493/493

✓ No mixed-script contamination: PASS

✓ Chinese punctuation: PASS

Without this checklist, I would have shipped the Korean summaries to production. I would have shipped the 70% English Cantonese UI. I would have shipped blog posts with broken internal links pointing to translated slugs that don't exist.

The checklist isn't bureaucracy. It's the only reliable QA when your production pipeline generates thousands of files you can't personally read.
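The UI string files can get a structural check of their own. The sketch below assumes nested JSON message objects, as next-intl message files commonly are, already loaded into dicts (for example with json.load). It reports keys that are missing or still identical to English. The function names are hypothetical.

```python
def flatten(messages: dict, prefix: str = "") -> dict[str, str]:
    """Flatten nested message objects into dotted keys like 'nav.home'."""
    flat = {}
    for key, value in messages.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat

def untranslated_keys(en: dict, locale: dict) -> list[str]:
    """Keys missing from the locale file or whose value is identical to English."""
    en_flat, locale_flat = flatten(en), flatten(locale)
    # A missing key falls back to the English value, so it compares equal and is reported.
    return sorted(key for key, value in en_flat.items()
                  if locale_flat.get(key, value) == value)
```

Some identical values are legitimate (brand names, for instance), so the useful signal is the ratio: a file where most keys come back untranslated needs a redo.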

The Final Numbers

| Metric | Value |
| --- | --- |
| Languages | 11 (English + 10 translations) |
| Posts translated | 493 per language × 10 = ~4,900 files |
| Words | ~3.9 million |
| Calendar days | 4 (Feb 24–27) |
| UI string files | 10 locale JSON files (~475 keys each) |
| Email templates | 10 locales |
| Journey data | Milestones + learning paths translated via JSONB |
| Times hit usage cap | Lost count |
| Complete locale redos | 1 (Korean) |

What I Actually Learned

1. Style guides are everything. The Cantonese voice, the Korean formality level, the Portuguese "Abraço" sign-off — these aren't decoration. They're the difference between "translated" and "localized." Without a style guide, you get grammatically correct content that reads like a government form.

2. Parallel agents are a force multiplier — and a risk multiplier. When 30 agents execute correctly, you get a language done in an hour. When 30 agents execute the same mistake, you get 350 truncated posts and a full redo.

3. QA is not optional at scale. You cannot manually review 4,437 translated posts. But you can automate structural checks that catch the most common failures: missing files, broken frontmatter, rewritten URLs, wrong sign-offs, truncated content.

4. The hardest part isn't translation — it's voice. Any LLM can translate text. Making it sound like the person who wrote the original — maintaining the warmth, the casualness, the intellectual curiosity — across 9 languages and 17 years of evolving writing style, that requires explicit instruction and iterative refinement.

5. Usage caps are a real constraint. Even on the highest tier, extended parallel agent sessions will hit limits. Plan for interruptions. Structure your work so you can pick up where you left off.

6. Test with real readers. I could QA the Vietnamese translations myself. For Korean or Japanese, I relied on structural checks and trusted the model. That's a known gap. If you're serious about quality in languages you don't speak, get native readers to spot-check a sample.

Was It Worth It?

My blog now reaches readers in their native language across 11 locales. A Vietnamese marketing professional in Ho Chi Minh City can read my 2011 analysis of Singapore's search market in Vietnamese. A Japanese AI researcher can read my 2024 posts about building Sydney in Japanese. A Cantonese speaker in Hong Kong gets content that sounds like it was written for them, not translated at them.

Is every translation perfect? No. Machine translation at this scale has rough edges. Some metaphors don't land. Some cultural references need localization that goes beyond word-for-word substitution.

But the alternative was having 493 posts in English only, accessible to maybe 20% of the world's internet users. Now it's accessible to over 60%.

Four days. 3.9 million words. The infrastructure is in place. Every new post I write in English gets translated into 10 languages as part of the deployment process.

The real question isn't whether AI translation is perfect. It's whether perfect is the enemy of accessible.

Cheers, Chandler