The Code Was the Easy Part of Shipping Prova

Prova started feeling real to me when the product began making real promises about progression, billing, auth, and state. The hard part was not generating code. It was building the contracts around it.

509 posts about AI, learning, and building products

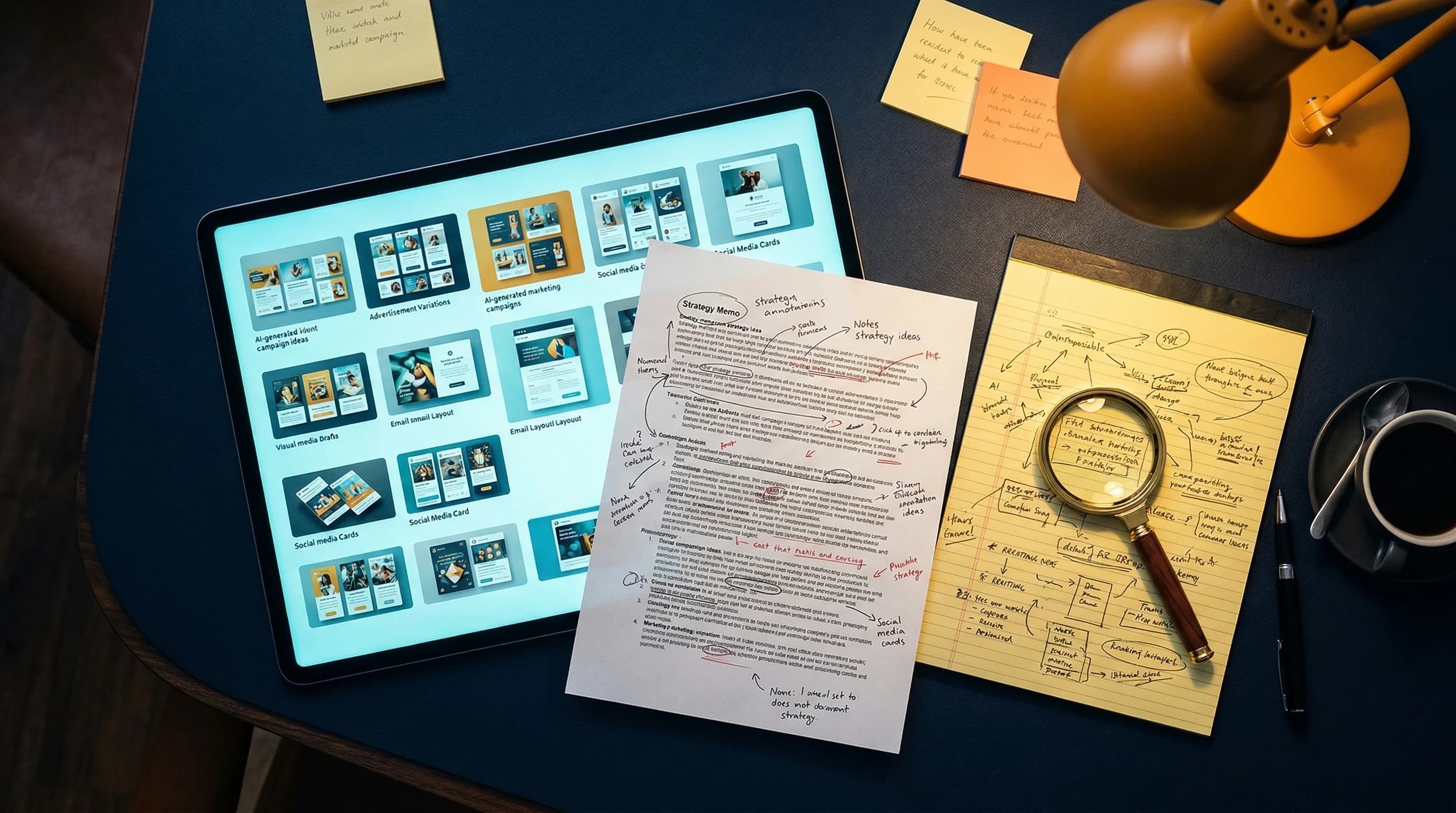

I keep seeing AI tools pitch agencies on content volume. But if you've ever managed real client relationships, you know the harder problem is trust: isolation, permissions, context, and not leaking one client's thinking into another's.

I thought I could splice together one course module, trim a few transitions, and call it a YouTube video. I was wrong. Building The Parade Problem taught me that good repurposing is not clipping. It is redesigning the idea for a different promise, a different audience, and a different first 30 seconds.

I cancelled Claude Max after 13 months and US$1,892.38 in subscription fees. This is not a victory lap. It is a 30-day test to see whether I can keep shipping STRATUM, DIALØGUE, my course platform, and this site at the same pace with Codex as my primary tool.

Two weeks after my original comparison, both tools shipped major updates. Codex challenged my product strategy in ways Claude Code did not. Claude Code shipped Agent Teams and AutoMemory. The result: I am cutting my $200/month Max plan — and getting better output for less money.

Most conversations about AI and team design start with headcount. I think that is the wrong starting point. The better question is which functions your team needs — and those turn out to be the same whether you have four people or forty.

DIALØGUE supports 7 languages, but the real multilingual work was not translating strings. It was fixing audience-local dates, TTS consistency, UI language drift, and deciding where quality mattered enough to slow down.

I spent years in advertising watching teams confuse motion with progress. Then I started building AI marketing tools and realized the problem was getting worse: faster execution, weaker judgment.

AI can now produce media plans, performance summaries, measurement frameworks, and campaign setups at impressive speed. The problem is not that the output is obviously bad. The problem is that it is often good enough to pass a casual review while missing the business context that actually matters.

Most teams still ask which model to use. From my experience, that is no longer the main question. If your AI system forgets the client, the brand, the category, and what good looks like, the smartest model in the world still starts every conversation from zero.

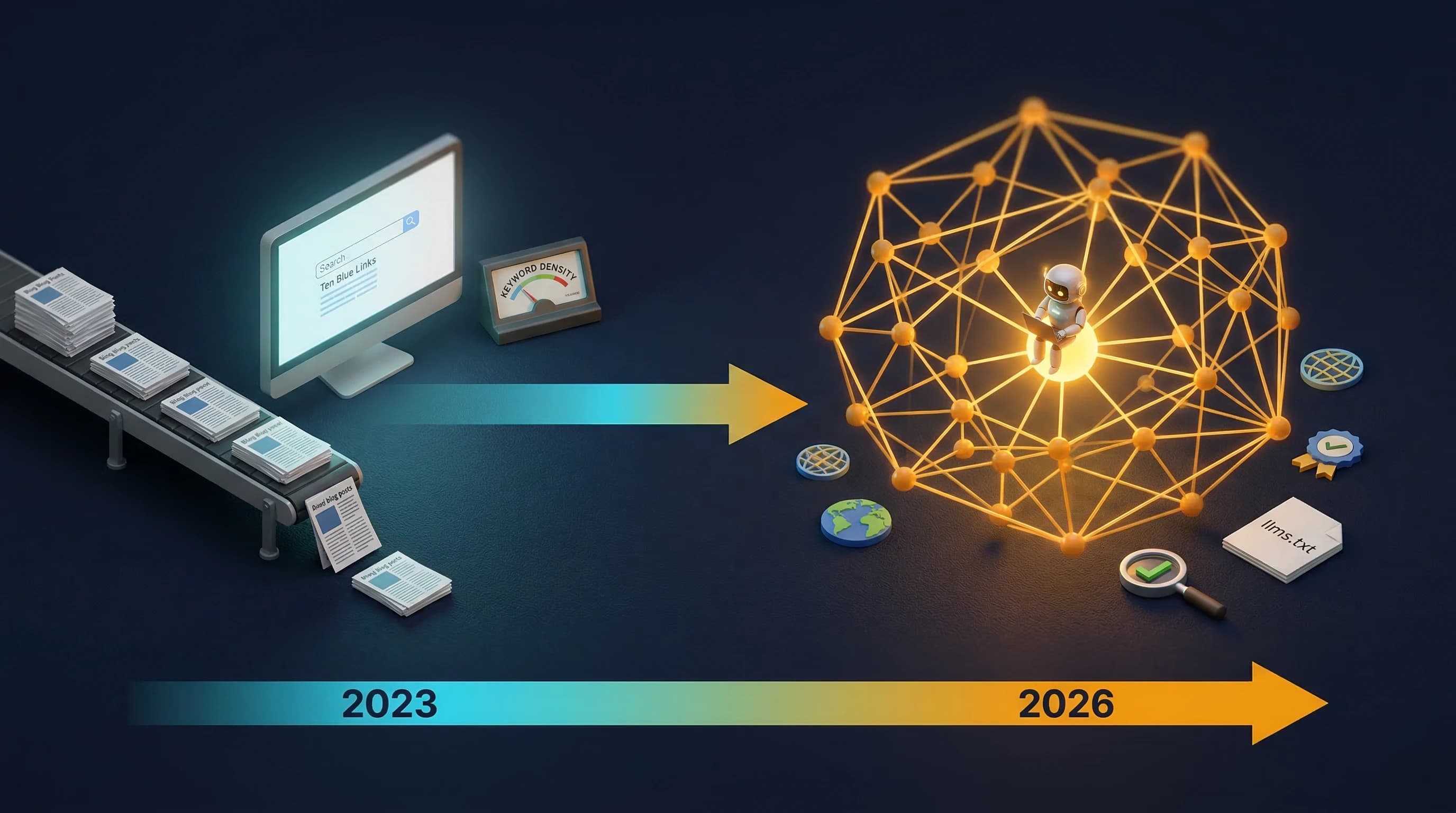

In 2023, I thought generative AI would flood search with cheap content and reduce the return on SEO. Three years later, that happened. But the bigger shift is that content production is no longer the moat. Structure, trust, QA, localization quality, and answer-engine visibility are.

One person. Seven modules. Three hours of video. Sixteen templates. A custom slide pipeline with 18 layout types. Professional voice clone. All while keeping my day job as VP. This is what the AI-first operating model looks like when you apply it to yourself.