How I Built a 7-Module Course Solo While Working Full-Time

One person. Seven modules. Three hours of video. Fifteen templates. A custom slide pipeline with 18 layout types. Professional voice clone. All while keeping my day job as VP. This is what the AI-first operating model looks like when you apply it to yourself.

I keep telling people the AI-first operating model lets a small team produce at a level that used to require a much larger one. Then I realized I should probably prove it.

So here's the story of how I built "AI-Native Media Operations: From Workflow to Operating Model" — a 7-module, ~3-hour video course with 15 templates, companion guides, a 50-page deep-dive PDF, and executive resources — while working full-time as a VP.

I'm sharing this not to impress anyone, but because the production process itself is a case study of the operating model the course teaches. And because I think people underestimate what's possible with one person and the right AI tools — while also overestimating how easy it is.

The Pipeline

The course production pipeline has four phases. Each one is AI-augmented, and each one required real human judgment at specific points.

Phase 1: Content & Slides

I wrote the course content in Markdown — one file per module, with a specific format: **On screen:** for what the audience sees, **Speaker notes:** for the voiceover script, and **Companion notes:** for the written companion that goes deeper than the video can.
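To make the format concrete, here's a minimal Python sketch of how a module file in this format could be split into per-slide fields. It assumes slides are separated by a line containing only `---`. That separator is my illustrative convention, not a detail of the actual pipeline. `Slide` and `parse_module` are hypothetical names.

```python
import re
from dataclasses import dataclass

# Hypothetical convention: slides in a module file are separated by a line
# containing only "---", and each slide uses the three bold labels below.
LABEL_RE = re.compile(r"^\*\*(On screen|Speaker notes|Companion notes):\*\*\s*(.*)$")
FIELD_FOR_LABEL = {
    "On screen": "on_screen",
    "Speaker notes": "speaker_notes",
    "Companion notes": "companion_notes",
}


@dataclass
class Slide:
    on_screen: str = ""        # what the audience sees
    speaker_notes: str = ""    # voiceover script
    companion_notes: str = ""  # deeper written companion


def parse_module(markdown: str) -> list[Slide]:
    slides = []
    for block in re.split(r"^---\s*$", markdown, flags=re.MULTILINE):
        sections: dict[str, list[str]] = {}
        current = None
        for line in block.splitlines():
            match = LABEL_RE.match(line)
            if match:
                # A label starts a new section; text on the same line is kept.
                current = FIELD_FOR_LABEL[match.group(1)]
                sections[current] = [match.group(2)] if match.group(2) else []
            elif current is not None:
                # Continuation lines belong to the most recent label.
                sections[current].append(line)
        if sections:  # skip blocks with no labelled content
            slides.append(Slide(**{f: "\n".join(ls).strip() for f, ls in sections.items()}))
    return slides
```

Keeping all three layers in one file per module means the slide, the voiceover, and the companion text for a given point can't drift apart during revisions.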

The slide rendering uses a custom pipeline I built: Markdown → 18 different layout types (title, flow-diagram, stat-callout, two-column, checklist, before-after, timeline, and more) → rendered HTML with a warm editorial design system.
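A common way to structure a renderer with many layout types is a registry that maps each layout name to its own render function. The sketch below assumes that design and implements only three of the 18 layouts. The `LAYOUTS` registry, the CSS class names, and the content dictionaries are all hypothetical. They are not the course's real design system.

```python
from html import escape
from typing import Callable

# Hypothetical registry: layout name -> function that turns slide content
# (a plain dict here) into an HTML fragment.
Renderer = Callable[[dict], str]
LAYOUTS: dict[str, Renderer] = {}


def layout(name: str):
    """Decorator that registers a render function under a layout name."""
    def register(fn: Renderer) -> Renderer:
        LAYOUTS[name] = fn
        return fn
    return register


@layout("title")
def render_title(c: dict) -> str:
    return f'<section class="slide title"><h1>{escape(c["heading"])}</h1></section>'


@layout("stat-callout")
def render_stat(c: dict) -> str:
    return (f'<section class="slide stat-callout"><p class="stat">{escape(c["stat"])}</p>'
            f'<p class="caption">{escape(c["caption"])}</p></section>')


@layout("checklist")
def render_checklist(c: dict) -> str:
    # Each item is its own fragment so it can be revealed progressively.
    items = "".join(f'<li class="fragment">{escape(i)}</li>' for i in c["items"])
    return f'<section class="slide checklist"><ul>{items}</ul></section>'


def render_slide(layout_name: str, content: dict) -> str:
    try:
        renderer = LAYOUTS[layout_name]
    except KeyError:
        raise ValueError(f"unknown layout: {layout_name}") from None
    return renderer(content)
```

With this shape, adding a layout means writing one decorated function, and an unknown layout name fails loudly instead of silently falling back to a default.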

What AI handled: Drafting initial slide content from my outlines, suggesting layout types, generating the CSS and rendering code.

What required human judgment: Every content decision. Which frameworks to include and which to cut. How to sequence the argument. What's too much for a slide and belongs in the companion guide instead. The design system itself — choosing warm light mode over the dark-mode default, the color palette, the font pairing.

Phase 2: Voice

The narration uses ElevenLabs Professional Voice Clone — my actual voice, cloned from samples I recorded. It's not a generic AI voice. It's my voice, generated from the speaker notes I wrote.

The pipeline generates audio with word-level timestamps, which Phase 3 uses to sync slide transitions to the narration. Slides with progressive reveals (bullet lists, checklists, flow diagrams) advance fragment by fragment, timed to the words being spoken.
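Here is one simple way to turn word-level timestamps into reveal times. If you know which spoken word each fragment starts on, reveal the fragment at that word's start time. This sketch assumes two things: the timestamps are already parsed into `Word` records in seconds, and the caller supplies the fragment start indices. Both are hypothetical stand-ins, not the ElevenLabs response format.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the slide's audio
    end: float


def reveal_times(words: list[Word], fragment_starts: list[int]) -> list[float]:
    """Return when each fragment should appear, in seconds.

    fragment_starts[i] is the index in `words` of the first word spoken
    about fragment i (hypothetical input chosen by the caller).
    """
    times = [words[i].start for i in fragment_starts]
    # Reveals have to move forward in time, or the slide would jump backwards.
    if any(later < earlier for earlier, later in zip(times, times[1:])):
        raise ValueError("fragments must start in spoken order")
    return times
```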

What AI handled: All audio generation, word-level timestamp extraction, silence detection as fallback.
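The post doesn't describe how the silence-detection fallback works. As an illustration, here is a generic RMS-threshold approach: slide a short window over the samples and report stretches that stay quiet long enough. It assumes mono float samples in the range -1.0 to 1.0. The window, threshold, and minimum-duration defaults are placeholder values, not tuned settings.

```python
import math


def find_silences(samples: list[float], sample_rate: int, window_s: float = 0.02,
                  threshold: float = 0.01, min_silence_s: float = 0.3) -> list[tuple[float, float]]:
    """Return (start_s, end_s) spans where windowed RMS stays below threshold."""
    win = max(1, round(sample_rate * window_s))  # samples per analysis window
    n_windows = len(samples) // win              # trailing partial window is ignored
    silences = []
    run_start = None                             # start time of the current quiet run
    for w in range(n_windows):
        chunk = samples[w * win:(w + 1) * win]
        rms = math.sqrt(sum(x * x for x in chunk) / len(chunk))
        t = w * win / sample_rate
        if rms < threshold:
            if run_start is None:
                run_start = t
        elif run_start is not None:
            # A loud window ends the quiet run; keep it only if it was long enough.
            if t - run_start >= min_silence_s:
                silences.append((run_start, t))
            run_start = None
    if run_start is not None:  # audio ended while still quiet
        end = n_windows * win / sample_rate
        if end - run_start >= min_silence_s:
            silences.append((run_start, end))
    return silences
```

The gaps this finds can stand in for word boundaries when timestamps are missing. They are coarser, though, because a short pause inside a sentence may fall below `min_silence_s` and be ignored.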

What required human judgment: Writing the speaker notes. Every voiceover script went through multiple revisions. That wasn't because the AI couldn't generate one. It was because "technically correct" and "sounds like something I'd actually say" are different things. I also had to tune the voice settings: stability, similarity, style, and speed. The first attempts sounded robotic, and it took several iterations to find settings that sounded natural.

Phase 3: Video Assembly

Each rendered slide is captured as a screenshot, paired with its audio segment, and assembled into a final MP4 video. The fragment sync system splits audio at natural word boundaries, so progressive reveals feel timed to the narration rather than arbitrarily chopped.
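Splitting "at natural word boundaries" can be as simple as cutting in the middle of the pause between the last word of one fragment and the first word of the next, so no word gets clipped. Here is a minimal sketch. It assumes hypothetical `Word` timestamps in seconds and fragment start indices supplied by the caller.

```python
from typing import NamedTuple


class Word(NamedTuple):
    text: str
    start: float  # seconds
    end: float


def fragment_segments(words: list[Word], fragment_starts: list[int],
                      total_duration: float) -> list[tuple[float, float]]:
    """Split one slide's audio into (start, end) spans, one per fragment.

    Each cut lands halfway through the pause before a fragment's first word,
    so no word is clipped. The first fragment always begins at 0.0.
    """
    cuts = []
    for idx in fragment_starts[1:]:
        if not 0 < idx < len(words):
            raise ValueError(f"fragment start {idx} is out of range")
        cuts.append((words[idx - 1].end + words[idx].start) / 2)
    bounds = [0.0, *cuts, total_duration]
    return list(zip(bounds, bounds[1:]))
```

Cutting mid-pause rather than exactly at a word's start time leaves a little breathing room on both sides of each cut, which helps the reveal feel deliberate.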

What AI handled: The entire assembly pipeline — screenshot capture, audio splitting at word boundaries, ffmpeg assembly, silence padding.
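For the ffmpeg step, a typical pattern is to render each slide as a still image held for the length of its audio, then join the segments with ffmpeg's concat demuxer. The sketch below only builds the command lines, which you could run with `subprocess.run(cmd, check=True)`. The function names are hypothetical, and the real pipeline's flags and silence-padding logic may differ.

```python
def segment_cmd(image: str, audio: str, out: str) -> list[str]:
    """ffmpeg argv that shows one still slide for the length of its audio."""
    return [
        "ffmpeg", "-y",
        "-loop", "1", "-i", image,  # loop the still image as a video stream
        "-i", audio,
        "-c:v", "libx264", "-tune", "stillimage", "-pix_fmt", "yuv420p",
        "-c:a", "aac",
        "-shortest",  # end the segment when the audio ends
        out,
    ]


def concat_list(segments: list[str]) -> str:
    """Contents of a list file for ffmpeg's concat demuxer.

    Use absolute paths; a single quote inside a path is escaped as '\\''.
    """
    lines = ["file '" + s.replace("'", "'\\''") + "'" for s in segments]
    return "\n".join(lines) + "\n"


def concat_cmd(list_file: str, out: str) -> list[str]:
    """ffmpeg argv that joins the listed segments without re-encoding."""
    # -safe 0 allows absolute paths in the list file.
    return ["ffmpeg", "-y", "-f", "concat", "-safe", "0", "-i", list_file, "-c", "copy", out]
```

This assumes every screenshot has the same dimensions and every segment uses the same encoder settings. Under those conditions, `-c copy` can join the segments without a second encode.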

What required human judgment: Reviewing the final videos, catching slides where the fragment timing felt wrong, and identifying transitions that needed voiceover smoothing. The last review round alone produced about 29 transition fixes across all 7 modules.

Phase 4: Materials

Fifteen templates, a 50-page deep-dive guide, companion guides for each module, executive resources (board presentation template, delegation guide, ROI worksheet, executive briefs).

What AI handled: First drafts of most templates, companion guide structure, formatting.

What required human judgment: All content decisions. The Workflow Audit template isn't a generic AI output — it's designed from 20 years of watching teams audit their workflows and get it wrong. The ROI Worksheet includes real cost data from my own products because I didn't want to invent numbers. Every template got multiple revision passes.

What It Actually Cost (Time)

I don't have an exact hour count because I worked on this in evenings and weekends over several months, alongside my full-time VP role. But here's the rough breakdown:

- Content writing and revision: The most time. Weeks. The course content went through multiple review cycles, and feedback from external reviewers significantly changed the structure of Modules 6 and 7.

- Slide pipeline development: The rendering system, layout types, and design system took time to build — but they're reusable for future courses.

- Audio generation: Fast once voice settings were tuned. An hour or two per module for generation + spot-checking.

- Video assembly: Mostly automated. Review time was the bottleneck, not generation time.

- Templates and materials: Several days for the full set.

If I had hired a production team — designer, video editor, voice talent, template designer — this would have cost tens of thousands of dollars and taken months of coordination. Instead, it cost API credits and my time.

The 60/40 Split

In a blog post last month, I wrote about the 60/40 principle: AI gets you about 60% of the way, and the remaining 40% is human refinement. Building this course confirmed it.

The AI handled production — rendering, audio generation, video assembly, first drafts. That's the 60%. The human handled judgment — content decisions, design taste, quality review, revision after revision. That's the 40%.

The 40% is where all the value lives. Without it, this would be an AI-generated course that's technically complete and experientially hollow. With it, every slide has a reason to exist, every speaker note sounds like something I'd actually say in a meeting, and every template is designed for someone to actually use on Monday morning.

Why I'm Telling You This

Because the course teaches an AI-first operating model, and I think it's fair to show that I practice what I teach.

I disclosed the production method in the course itself. A transparency slide in Module 1 says exactly how the course was made. Voice: an ElevenLabs Professional Voice Clone (PVC). Slides: a custom pipeline. Companions: co-written with Claude. I'm not hiding any of this.

If one person can produce a 7-module course while working full-time as a VP, your team of 20 can do dramatically more than you think with the same operating model. The tools are the same. The leverage is greater.

That's the thesis. This course is the proof.

What I'd Do Differently

- Start with the design system, not the content. I designed the slide system partway through production and had to retrofit earlier modules. Next time: design system first, then write content to fit it.

- External review earlier. The reviewer feedback that reshaped Modules 6-7 came late in the process. If I'd gotten that feedback after Module 3, the whole course would be tighter.

- Speaker notes are harder than slides. I underestimated how much revision the voiceover scripts would need. "Write clearly" and "write for spoken delivery" are different skills.

That's it from me. If you're thinking about building a course, a knowledge product, or any content-heavy project — the tools are there. The operating model works. Just budget for the 40%.

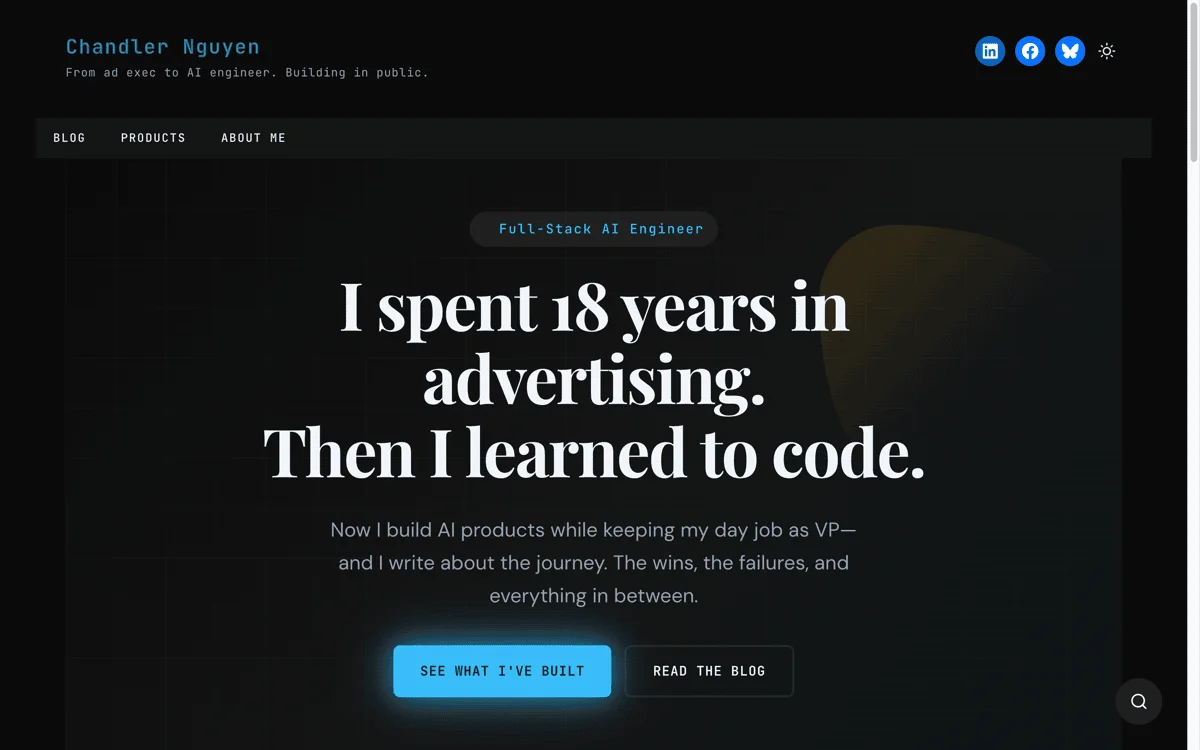

Cheers, Chandler