Have you ever tried to use text-to-image AI tools to create art and failed miserably? Well, that’s exactly what happened to my daughter and me.

Like many of you, I have seen plenty of YouTube videos and read many online articles about how easy it is to create art and full stories (with illustrations) using text-to-image AI tools. Some influencers (including VCs) even said on podcasts that they would make children's books with their kids over the weekend. It sounds simple enough, right? Especially since I have been playing with Stable Diffusion (mainly via Dream Studio) for some time now. So "naturally," I told my daughter that it would be fun to work together to turn her story (Inner truths) into an illustrated book.

After a few long days of trying, the results have been disappointing! So I am writing this post for two reasons:

- To share our experiences

- To learn from the wisdom of the internet what I can do to improve the situation and avoid disappointing my daughter.

Tools that we are using

We have mainly been using Midjourney and Stable Diffusion (via Dream Studio and Outpainting). I am sure professional tools exist that can generate beautiful illustrations, because we have seen amazing work from Disney, Marvel, and other companies. But the point of many articles and videos about AI art is that you can create with mass-market tools too. 🙁 It's overhyped.

It is relatively easy to come up with the main character’s face

With some guidance, it was pretty easy for my daughter to create the main character's face for her story. You can see from the two images below that my daughter had very specific details in mind for her main character.

The first image was created within 20 minutes, and the second within the next hour or so, using Midjourney. The description (or prompt) was roughly: "Avila Abrams, a girl with little curly hair and it is a very dark brown color, green eyes with a hint of blue, light freckles, a loose white sweater with grey stripes, light bags under her eyes, a little frown on her face, a sharp v-shaped face, and she is wearing headphones in her ears."

The second image is the final version we chose.

Then we got stuck

With the main character's face done, we wanted to generate the rest of her look and put her into the first scene. My daughter wanted her character, Avila, to wear a loose white sweater with grey stripes and dark blue skinny jeans. But we could not generate that image while keeping her face the same as in the picture above. I have been watching the latest videos from "Tokenized AI by Christian Heidorn", and we have tried prompts like:

- /imagine [URL] description

- /imagine wide angle shot, description --seed [seed number]

- /imagine [URL] wide angle shot, full body image, description --seed [seed number]

- /imagine [URL] full body image, wide angle shot, description

- etc.

And they all failed.

After that, I tried uploading Avila's face to Dream Studio and generating her full-body image from there, but that failed too. We couldn't keep the main features of her face reasonably consistent.

Then I did more research and came across this video from Prompt Muse. She described combining the "Thin Plate Motion Colab Notebook", "Out Painting", and "Dreambooth". I got stuck halfway through Thin Plate Motion on some errors that I couldn't figure out (well, I am not a coder :|). As for Out Painting, it is based on Stable Diffusion, but the interface is very clunky, and even after many attempts the output was not what we were looking for.

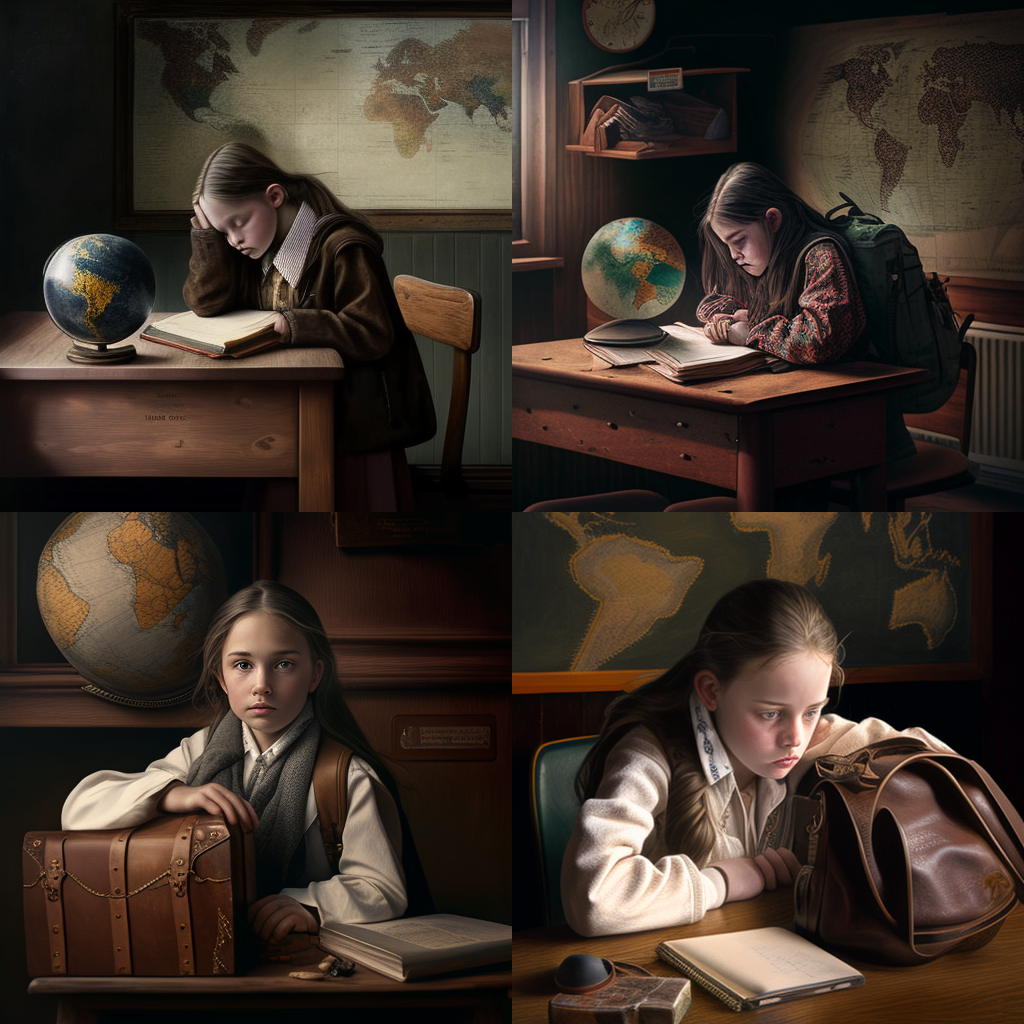

The first scene my daughter wants is "Avila in a modern middle school geography classroom, wearing an olive green waterproof jacket and dark blue skinny jeans, walking away from her desk, one of the girl's hands on a dark brown leather bag." But these are the outputs, and none of them is what we were looking for. You can see that in some outputs the model somehow switched to a comic style, which is not what we asked for.

We tried blending two images together to see what would happen

Then I had an idea: generate the character's full-body image first, with the right camera angle, and then blend it with a detailed classroom image. Well, we haven't managed to make that work either. The character's face and look differ too much from one image to the next, and the machine can't handle the level of detail my daughter imagines for the classroom. T.T

And this is just the first scene of the story 🙁

I tried Bing Chat, but, well, it didn't work

I asked Bing Chat for a step-by-step guide to doing this in Midjourney or Stable Diffusion, and what it offered was no different from what we had already tried above.

Help

So what are we doing wrong? I want it to be a fun project with my daughter. But we are stuck!

Also, my conclusion is that these tools are not ready for mass use. They can generate a single image well, but not a consistent series of images. It is not easy to control the direction your character is facing or the "camera angle" of the image, especially when the angle is something other than a wide-angle or top-down shot. My daughter imagines a very detailed scene, and these tools can't create it for us.

Tell me in the comments: what should we do?

Last but not least, our ask to Midjourney, Stable Diffusion, and similar companies: can you make life easier for us? Give us the option to keep a character's main features constant and to place that character in different scenes more easily. Right now, it is too hard T.T

Chandler
